The dream of turning your smart home into a passive income stream through distributed AI compute has moved from the realm of speculative "DePIN" (Decentralized Physical Infrastructure Networks) whitepapers into the messy, fragmented reality of 2026. You are essentially renting out your idle silicon (a high-end NPU in a smart fridge, a dormant GPU in a media server, or a rack of edge-compute nodes) to decentralized inference markets. However, between the marketing gloss of "Web3 passive income" and the reality of thermals, firmware locks, and network latency lies a minefield of operational friction.

The Operational Reality: More Than Just "Plug and Play"

In theory, the protocol is elegant: you install a lightweight containerized agent on your local hardware, it negotiates a handshake with a global mesh network, and you get paid in micro-transactions for every token generated. In practice, the "smart home" is an environment designed for convenience, not high-performance computing.

If you attempt to run a heavy inference workload on an integrated smart home hub, you aren't just hitting performance ceilings; you are fighting the manufacturer’s power-management firmware. Most consumer IoT devices are configured with aggressive thermal throttling profiles. As soon as your device begins drawing consistent power to run a Large Language Model (LLM) or a computer vision task, the system controller detects a "non-standard thermal event" and throttles the processor, tanking your compute credit score and, subsequently, your earnings.
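On a Linux-based board you can at least watch throttling happen instead of discovering it in your earnings. The sketch below is a rough heuristic, not a vendor tool: it assumes the device exposes the standard sysfs thermal and cpufreq files (many locked-down consumer hubs will not), and the 80 °C limit and 90% clock ratio are illustrative thresholds, not manufacturer values. NPU clocks are usually not visible through these files, so it only watches the CPU.

```python
from pathlib import Path
from typing import Optional

# Illustrative thresholds (assumptions); tune them for your own board.
TEMP_LIMIT_C = 80.0
CLOCK_RATIO = 0.9


def read_int(path: Path) -> Optional[int]:
    """Read a single integer from a sysfs file, or None if it is missing."""
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        return None


def is_throttled(temp_millic: int, cur_khz: int, max_khz: int,
                 temp_limit_c: float = TEMP_LIMIT_C,
                 ratio: float = CLOCK_RATIO) -> bool:
    """Flag throttling only when the chip is hot AND clocked well below max.

    Low clocks on their own usually mean the governor is idling,
    so temperature is required as the second signal.
    """
    return temp_millic / 1000 >= temp_limit_c and cur_khz < ratio * max_khz


def sample(zone: int = 0, cpu: int = 0) -> Optional[bool]:
    """Check one thermal zone and one CPU; None if the sysfs data is unavailable."""
    temp = read_int(Path(f"/sys/class/thermal/thermal_zone{zone}/temp"))  # millidegrees C
    freq_dir = Path(f"/sys/devices/system/cpu/cpu{cpu}/cpufreq")
    cur = read_int(freq_dir / "scaling_cur_freq")  # kHz
    top = read_int(freq_dir / "cpuinfo_max_freq")  # kHz
    if temp is None or cur is None or top is None:
        return None
    return is_throttled(temp, cur, top)


if __name__ == "__main__":
    print("throttled:", sample())
```

Run it from cron or a systemd timer while the node is under load; a steady run of `True` results means the workload is fighting the firmware's power management.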

The Firmware Wall

We’ve seen extensive debate on forums like Hacker News and specialized Discord channels regarding "vendor-locked silicon." Many modern smart devices use signed firmware. Even if you have physical access to the board, you cannot inject custom inference runtimes without tripping a secure boot failure. The community workaround? Users are increasingly turning to open-hardware gateways—devices like the Pine64 or dedicated RISC-V compute sticks—that bypass the manufacturer's walled garden entirely. This is where the real money is, but it requires a level of technical literacy that goes far beyond "smart home enthusiast."

The Economics of Idle Compute: Scaling vs. Reality

The promise of earning $50–$200 a month per household is the hook, but let’s look at the actual math. Distributed AI networks pay based on throughput and latency. If your home ISP has a jittery connection or you’re behind a Carrier-Grade NAT (CGNAT), your node will be constantly deprioritized by the orchestrator.
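Both problems can be spotted before you commit any hardware. A minimal sketch, assuming you can read your router's WAN address from its admin page and collect a handful of round-trip times (for example from `ping`): a WAN address inside 100.64.0.0/10, the shared address space reserved by RFC 6598, is a strong hint that your ISP runs CGNAT. The jitter figure is a simplified mean of consecutive RTT differences, not the smoothed estimator from RFC 3550, and orchestrators' real latency scoring may differ, so treat it as a rough self-check.

```python
import ipaddress
import statistics
from typing import Sequence

# RFC 6598 shared address space, commonly used by carriers for CGNAT.
CGNAT_RANGE = ipaddress.ip_network("100.64.0.0/10")


def looks_like_cgnat(router_wan_ip: str) -> bool:
    """True if the router's WAN address sits in the CGNAT shared range."""
    return ipaddress.ip_address(router_wan_ip) in CGNAT_RANGE


def jitter_ms(rtts_ms: Sequence[float]) -> float:
    """Simplified jitter: mean absolute change between consecutive RTTs."""
    if len(rtts_ms) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(rtts_ms, rtts_ms[1:])]
    return statistics.mean(diffs)


if __name__ == "__main__":
    print(looks_like_cgnat("100.72.14.3"))      # placeholder address
    print(jitter_ms([21.0, 35.0, 19.0, 48.0]))  # placeholder RTTs
```

A private (RFC 1918) WAN address points to double NAT instead, which causes similar reachability problems for inbound connections.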

Furthermore, there is the hidden cost of wear and tear. Consumer-grade flash storage and solid-state drives are not rated for the constant write cycles that heavy model swapping in AI inference demands. I have tracked several user reports on GitHub issue threads where users found that their home automation controllers had physically failed within 14 months due to flash exhaustion. When you factor in electricity costs, which are non-trivial when running a GPU at 60–70% capacity 24/7, the "profitability" often shrinks to break-even or worse.
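The trade-off is easier to judge with the numbers written down. A back-of-the-envelope sketch, assuming a 30-day month and straight-line depreciation of the hardware over its expected life; every input in the example is a placeholder to replace with your own wall-meter readings, tariff, and payout history.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 24 * 30  # assumes a 30-day month


@dataclass
class NodeEconomics:
    gross_monthly_earnings: float  # payouts converted to your currency
    avg_power_watts: float         # measured at the wall, not the spec-sheet TDP
    price_per_kwh: float           # your electricity tariff
    hardware_cost: float           # share of the hardware price assigned to the node
    expected_life_months: float    # e.g. until the flash storage wears out

    def electricity_cost(self) -> float:
        kwh = self.avg_power_watts * HOURS_PER_MONTH / 1000
        return kwh * self.price_per_kwh

    def depreciation(self) -> float:
        # Straight-line: spread the hardware cost evenly over its life.
        return self.hardware_cost / self.expected_life_months

    def net_monthly(self) -> float:
        return self.gross_monthly_earnings - self.electricity_cost() - self.depreciation()


if __name__ == "__main__":
    # Placeholder inputs, not measured data.
    node = NodeEconomics(gross_monthly_earnings=100.0, avg_power_watts=250.0,
                         price_per_kwh=0.25, hardware_cost=420.0,
                         expected_life_months=14.0)
    print(f"electricity {node.electricity_cost():.2f}, "
          f"depreciation {node.depreciation():.2f}, net {node.net_monthly():.2f}")
```

With these placeholder inputs, $45 of electricity and $30 of depreciation leave $25 of a $100 payout, and a shorter hardware life or a higher tariff erases the rest quickly.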

Real Field Reports: Successes and Disasters

- Case Study A (The Enthusiast Success): User u/compute_junkie on a major tech subreddit reported a successful setup using a dedicated Kubernetes cluster on recycled enterprise hardware rather than consumer IoT. By isolating the inference workload from the smart home automation tasks, they maintained 99.9% uptime. The key takeaway? Don't run production AI on the device that controls your smart locks.

- Case Study B (The Infrastructure Meltdown): A thread in an IoT-focused GitLab repo detailed a failure where an automated security camera system crashed during a firmware-distributed compute update. The result? A homeowner locked out of their house for three hours because the edge-compute node was sharing local network resources with the home security hub, creating a bottleneck that caused a total system timeout.

The Moderation and Safety Minefield

If you are leasing your hardware to a decentralized network, you don't always have granular control over what you are computing. Most platforms claim they use "blind computation" (via TEEs, Trusted Execution Environments), but that only means you cannot see what is being processed; it does not stop objectionable content from being processed on your hardware.

There is a recurring controversy in these communities: what happens when your home IP address is tagged as the origin of a prohibited or malicious query? Even if the service provider handles the legal indemnity, your home IP range can still be flagged by ISP spam filters or firewall services. This is a "silent" cost that many operators neglect to account for until their Netflix account suddenly stops working because a CDN has blacklisted their IP for "suspicious traffic patterns" originating from their home node.
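You can at least check the public blocklists that many spam filters consult. A minimal sketch using the standard DNSBL convention (reverse the IPv4 octets, append the list's zone, look up an A record); it assumes Spamhaus ZEN as the example list and a resolver that Spamhaus will answer, since queries relayed through large public resolvers are typically refused with a 127.255.255.x code. CDN reputation systems are proprietary and cannot be queried this way, so a clean result here is not proof of a clean reputation.

```python
import ipaddress
import socket

DEFAULT_ZONE = "zen.spamhaus.org"  # example list; check its usage terms first


def dnsbl_query_name(ip: str, zone: str = DEFAULT_ZONE) -> str:
    """Build the DNSBL lookup name: 203.0.113.7 -> 7.113.0.203.<zone>."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")
    return ".".join(reversed(octets)) + "." + zone


def is_listed(ip: str, zone: str = DEFAULT_ZONE) -> bool:
    """True if the list returns a 127.x answer (a listing) for this IP."""
    try:
        answer = socket.gethostbyname(dnsbl_query_name(ip, zone))
    except socket.gaierror:
        return False  # NXDOMAIN (or any resolution failure) is treated as not listed
    if answer.startswith("127.255.255."):
        # Spamhaus uses this range for refused or malformed queries, not listings.
        raise RuntimeError(f"query refused by {zone}: {answer}")
    return answer.startswith("127.")
```

Call `is_listed()` with your home's public IP (not the router's LAN address) from a machine that uses your ISP's resolver or your own recursive resolver.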

The "Workaround" Culture: Why We Do It

Why do users bother? The answer is less about the profit and more about the "decentralized ideology." Users are tired of relying on centralized clouds like AWS or Azure for their home automations. By contributing to a decentralized compute pool, these users feel they are helping build a "sovereign compute" layer that resists censorship.

The community response to platform failures is usually a mixture of intense troubleshooting and defensive posturing. When an update breaks the compute node, you don't get a support ticket; you get a flurry of messages on Telegram or Discord. It’s an ecosystem held together by "duct tape and shared GitHub gists."

Counter-Criticism and Industry Debate

Critics from the traditional infrastructure sector—those who build actual Tier-3 datacenters—often mock the decentralized edge-compute model. Their argument is straightforward: "Physics doesn't care about your decentralized vision." They argue that the energy efficiency of a centralized datacenter will always outperform a fragmented network of household devices by several orders of magnitude.

- The Pro-Decentralization Counterpoint: "We aren't trying to beat the datacenter on efficiency; we are trying to beat them on availability and latency for local inference."

- The Reality Check: The fragmentation of the hardware (dozens of different NPU architectures, varying RAM speeds, inconsistent thermal headers) makes orchestration a nightmare. Scaling these systems reliably is a massive, unsolved engineering challenge that keeps lead developers awake at night.

Technical Bottlenecks and How to Mitigate Them

If you are committed to the path, you must design for resilience.

- Network Isolation: Always put your compute nodes on their own VLAN. Do not allow your public-facing AI node to talk to your local home automation controller (Home Assistant, Homebridge, etc.).

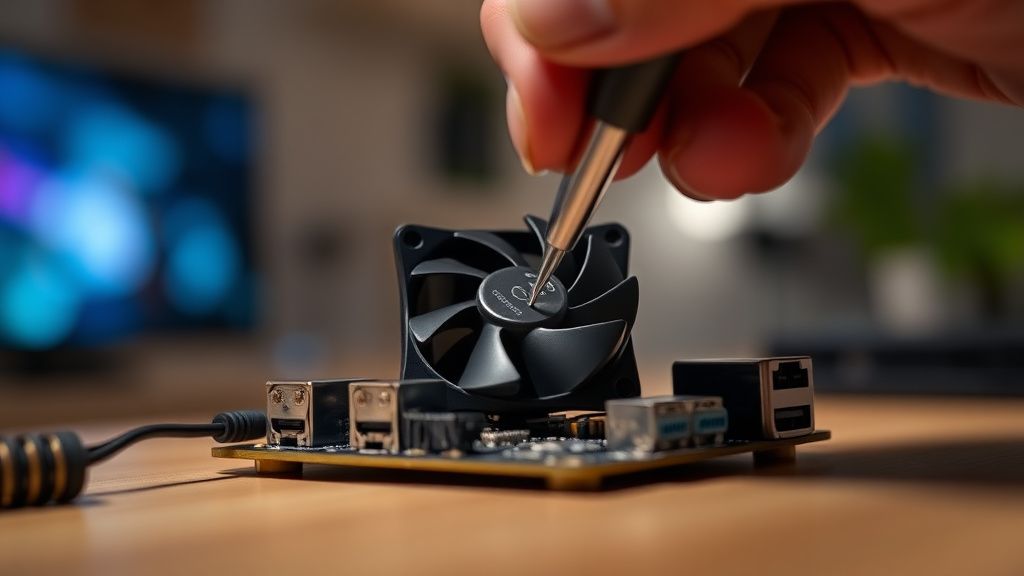

- Thermal Management: If you are running an NPU-equipped SBC, move it out of its plastic case and into an active-cooling enclosure.

- Local Logging: Use an observability tool like Prometheus/Grafana to monitor your own node’s power and temp spikes. Don't rely on the provider’s dashboard; they have an incentive to underplay the stress their software is putting on your hardware.
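If you would rather not pull in a client library, the Prometheus text exposition format is simple enough to serve with the standard library. A minimal sketch, assuming a Linux node that exposes `/sys/class/thermal`; the metric name `homenode_thermal_zone_celsius` and port 9101 are hypothetical choices, and power draw is left out because it normally needs an external meter or smart plug. On Linux, Prometheus's own node_exporter (for example its hwmon collector) already exposes many of these sensors and may be the simpler route.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from pathlib import Path
from typing import Dict

METRIC = "homenode_thermal_zone_celsius"  # hypothetical metric name


def read_temps_celsius() -> Dict[str, float]:
    """Read every sysfs thermal zone; raw values there are millidegrees Celsius."""
    temps: Dict[str, float] = {}
    for zone in sorted(Path("/sys/class/thermal").glob("thermal_zone*")):
        try:
            temps[zone.name] = int((zone / "temp").read_text().strip()) / 1000
        except (OSError, ValueError):
            continue  # some zones are unreadable or report errors
    return temps


def render_metrics(temps: Dict[str, float]) -> str:
    """Format readings in the Prometheus text exposition format."""
    lines = [
        f"# HELP {METRIC} Temperature reported by each sysfs thermal zone.",
        f"# TYPE {METRIC} gauge",
    ]
    for zone, value in sorted(temps.items()):
        # Zone names like "thermal_zone0" need no label-value escaping.
        lines.append(f'{METRIC}{{zone="{zone}"}} {value}')
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics(read_temps_celsius()).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(bind: str = "127.0.0.1", port: int = 9101) -> None:
    """Blocks forever; bind to the monitoring VLAN's address, not 0.0.0.0."""
    HTTPServer((bind, port), MetricsHandler).serve_forever()
```

Start `serve()` from a systemd unit, add the node's address as a scrape target in your Prometheus config, and let Grafana read from Prometheus as usual.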

Does running an AI node violate my residential internet terms of service?

In many cases, it sits in a grey area. Most residential ISPs have "reasonable use" policies. While decentralized compute isn't "piracy," the sheer volume of data synchronization can trigger automated warnings if your ISP strictly monitors upstream traffic. Always check your TOS for "commercial usage" clauses before deploying high-bandwidth nodes.
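Before reading the fine print, it helps to know how much you actually upload. A minimal sketch, assuming a Linux node where `/proc/net/dev` lists per-interface byte counters (transmit bytes are the ninth number after the interface name) and a 30-day month for the projection; `eth0` is a placeholder interface name, and a short sample can badly misjudge a bursty workload.

```python
import time


def tx_bytes(proc_net_dev: str, iface: str) -> int:
    """Return the transmit-bytes counter for one interface."""
    for line in proc_net_dev.splitlines()[2:]:  # skip the two header lines
        name, _, data = line.partition(":")
        if name.strip() == iface:
            return int(data.split()[8])  # fields 0-7 are receive stats, 8 is tx bytes
    raise KeyError(f"interface not found: {iface}")


def projected_monthly_gb(bytes_before: int, bytes_after: int, seconds: float) -> float:
    """Extrapolate an observed upload rate to a 30-day month, in decimal GB."""
    rate = (bytes_after - bytes_before) / seconds
    return rate * 30 * 24 * 3600 / 1e9


def measure(iface: str = "eth0", seconds: float = 300.0) -> float:
    """Sample the counter twice; "eth0" is a placeholder interface name."""
    with open("/proc/net/dev") as f:
        before = tx_bytes(f.read(), iface)
    time.sleep(seconds)
    with open("/proc/net/dev") as f:
        after = tx_bytes(f.read(), iface)
    return projected_monthly_gb(before, after, seconds)
```

Running `measure()` during a busy period gives a rough monthly upload figure to compare against any data cap or fair-use threshold in your plan.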

Is it actually profitable after accounting for hardware wear-out?

Barely. For most users, it is a break-even hobby. Unless you have hardware that was already sitting in a drawer gathering dust, the depreciation of your SSD and the increased power bill usually offset the crypto-token earnings. It is currently more of a "proof-of-concept" phase than a reliable income source.

What if my local network goes down?

Your node will simply drop out of the network consensus. There is usually no direct penalty beyond the earnings you miss while offline. However, frequent, erratic drops can lower your "reliability score" on some networks, and that score takes time to rebuild once you are back online.
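Why a few drops linger can be illustrated with a hypothetical scoring rule. Networks rarely publish their formulas, so this is purely an assumption: an exponentially weighted moving average that nudges the score toward 1 for each online interval and toward 0 for each missed one. Recovery is gradual because each clean interval only closes a fraction `alpha` of the remaining gap.

```python
def update_score(score: float, online: bool, alpha: float = 0.05) -> float:
    """Hypothetical EWMA step: pull the score toward 1.0 if online, toward 0.0 if not."""
    return (1 - alpha) * score + alpha * (1.0 if online else 0.0)


def intervals_to_recover(start: float, target: float, alpha: float = 0.05) -> int:
    """Count consecutive online intervals needed to climb from start to target."""
    if not 0.0 < alpha <= 1.0 or target >= 1.0:
        raise ValueError("need 0 < alpha <= 1 and target < 1")
    score, steps = start, 0
    while score < target:
        score = update_score(score, True, alpha)
        steps += 1
    return steps
```

Trying a few values of `alpha` shows the trade-off such a network would face: a smaller `alpha` forgives a one-off drop more gently but also makes the climb back slower.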

How do I ensure my smart home isn't spying on the AI node?

You can't guarantee it from software alone. The best approach is to physically segregate your network: use a dedicated router or an enterprise-grade switch to create a separate path for the compute node, so that it has no visibility into your local LAN devices (IoT cameras, smart thermostats, etc.) and they have none into it.
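Once the networks are split, verify the split from the compute node itself rather than trusting the router UI. A minimal sketch: the first function checks that the node's address and a smart-home device do not share a subnet, the second tries TCP connections to devices you name. The IP addresses below are placeholders, 8123 is Home Assistant's default web port, and a TCP probe only covers the ports you list, so it complements a default-deny firewall rule rather than replacing one.

```python
import ipaddress
import socket
from typing import Iterable, List, Tuple


def shares_subnet(node_cidr: str, device_ip: str) -> bool:
    """True if device_ip falls inside the node's own subnet (e.g. '192.168.50.10/24')."""
    network = ipaddress.ip_interface(node_cidr).network
    return ipaddress.ip_address(device_ip) in network


def reachable(targets: Iterable[Tuple[str, int]], timeout: float = 1.0) -> List[Tuple[str, int]]:
    """Return every (host, port) the node can open a TCP connection to."""
    hits: List[Tuple[str, int]] = []
    for host, port in targets:
        try:
            with socket.create_connection((host, port), timeout=timeout):
                hits.append((host, port))
        except OSError:
            # Refused, unreachable, or timed out (a refusal still proves IP reachability).
            pass
    return hits
```

Run on the node, `reachable([("192.168.1.20", 8123)])` should return an empty list; any entry means the compute VLAN can still reach the smart-home controller.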

Will this destroy my SSD?

If you are using a standard consumer NVMe drive for constant model caching, yes, it will shorten its lifespan. Professionals in this space recommend using high-end "Pro" drives with high TBW (Terabytes Written) ratings or, better yet, booting and caching from high-speed, enterprise-grade flash modules that are designed for constant, heavy write operations.
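Whether a given drive survives is arithmetic once you know how much the node writes. A minimal sketch, assuming a Linux node (in `/sys/block/<dev>/stat` the seventh field counts sectors written, always in 512-byte units) and decimal units (1 TB = 1,000 GB); `nvme0n1` and the TBW figure in the example are placeholders, and TBW ratings are endurance specifications rather than exact failure points.

```python
import time


def sectors_written(stat_text: str) -> int:
    """Seventh field of /sys/block/<dev>/stat: sectors written (512 bytes each)."""
    return int(stat_text.split()[6])


def daily_writes_gb(sectors_before: int, sectors_after: int, seconds: float) -> float:
    """Extrapolate an observed write rate to GB per day (decimal GB)."""
    bytes_written = (sectors_after - sectors_before) * 512
    return bytes_written / seconds * 86400 / 1e9


def drive_life_years(tbw_rating_tb: float, writes_gb_per_day: float) -> float:
    """Years until the rated terabytes-written budget is used up."""
    return tbw_rating_tb * 1000 / writes_gb_per_day / 365


def measure(device: str = "nvme0n1", seconds: float = 3600.0) -> float:
    """Sample write counters twice; "nvme0n1" is a placeholder device name."""
    path = f"/sys/block/{device}/stat"
    with open(path) as f:
        before = sectors_written(f.read())
    time.sleep(seconds)
    with open(path) as f:
        after = sectors_written(f.read())
    return daily_writes_gb(before, after, seconds)
```

A placeholder drive rated at 600 TBW absorbing 500 GB a day of model swaps lasts about 3.3 years (`drive_life_years(600, 500)`); doubling the daily writes halves that.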

Final Thoughts: The Path Forward

The transition to a decentralized compute economy is messy, loud, and inefficient. It is currently defined by early adopters willing to trade their hardware’s lifespan for a seat at the table. If you approach this as a way to get rich quick, you will likely leave disappointed and with a pile of dead hardware. But if you approach it as an experimentalist—a way to learn about distributed systems, orchestration, and the bleeding edge of the AI transition—it remains one of the most fascinating "home-lab" projects of our time. Just don't blame the protocol when your smart fridge starts rebooting at 3:00 AM because of a misplaced container deployment. That, as they say, is just part of the experience.