Monetizing local LLM infrastructure in 2026 is less about the "AI gold rush" and more about solving the "inference gravity" problem, a shift often compared to the strategic evolution described in "How to Build a Sustainable $15k/Month AI Automation Agency by 2026." As model weights become a commodity, the value has migrated from the model to the host. If you are sitting on idle hardware, weigh the practicalities covered in "Is Renting Your GPU for AI Worth It? The Realities of DePIN Mining" before putting those compute assets to work, as businesses increasingly demand local hosting for data sovereignty.

The Shift from Model API to Bare-Metal Inference

The primary fallacy of 2024-2025 was the belief that "owning the model" was the endgame, a misconception that mirrors the transition discussed in "The Future of DeFi Yield: Why 2026 Strategy Is Moving Beyond Staking." By 2026, the industry has realized that inference at scale on centralized API providers (such as OpenAI and Anthropic) is a variable-cost trap. CFOs are tired of usage-based billing spikes.

Local infrastructure monetization now pivots on the "Sovereign Inference" model. Businesses are willing to pay a premium for a private, dedicated "VPC-like" LLM environment, an approach to risk similar to the one discussed in "DeFi vs. Private Credit: How Institutional Investors Are Balancing Yield in 2026." This is your wedge. You are not selling a chatbot; you are selling hardened, latency-guaranteed inference uptime.

Tiered Monetization Strategies: Beyond the "Pay-per-Token"

If you try to compete with massive cloud providers on a pure pay-per-token basis, you will lose, much like the businesses that fail to diversify their supply chains in "Why E-commerce Giants Are Ditching Warehouses for 3D On-Demand Manufacturing." The cloud providers have economies of scale in cooling, electricity, and chip procurement that you cannot match. Your strategy must focus on the "operational friction" they ignore.

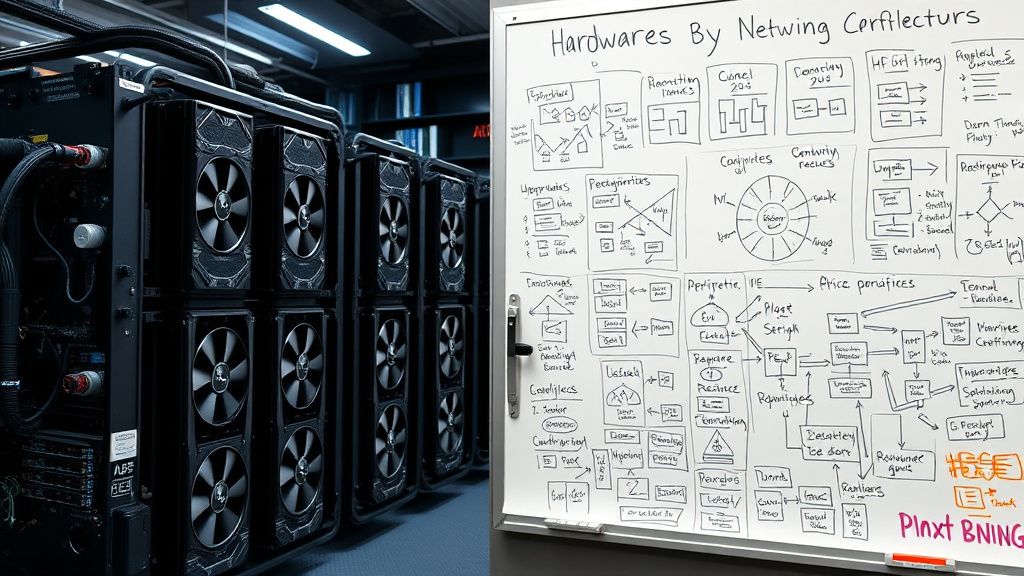

- The "Dedicated Partition" Model: Instead of selling tokens, sell reserved slices of hardware time. Enterprise clients want a guarantee that their fine-tuned Llama-4 or Mistral variant has 99.9% availability on dedicated silicon. They don't care about the total number of tokens; they care that their RAG (Retrieval-Augmented Generation) pipeline doesn't suffer latency spikes during their internal "all-hands" meeting.

- The "Inference-as-a-Service" (IaaS) Wrapper: Provide the API layer that sits between your local hardware and their internal app. You handle the VRAM management, the quantization strategy (e.g., dynamic EXL2 switching based on demand), and the telemetry logs.

- The Compliance-First Gateway: This is the most lucrative tier. Financial services and healthcare organizations are often restricted from sending data to public LLM APIs because of privacy and regulatory concerns. If you can provide a "Hardened Local Gateway" with SOC 2-compliant logging and air-gapped options, your price-per-hour can be 3x to 5x higher than commodity cloud providers.

Operational Reality: The "Broken Pipeline" Problem

Scaling local infrastructure is a nightmare of dependency hell, requiring the kind of strategic foresight discussed in "Why More Startups Are Trading Full-Time CEOs for Fractional Leadership." You will spend 20% of your time on AI and 80% on managing the "death by updates" cycle.

- The CUDA/Driver Trap: Every time a new version of `llama.cpp`, `vLLM`, or `TensorRT-LLM` drops, there is a high probability that your carefully tuned performance settings will break. In the field, maintainers often resort to "version pinning" everything, including kernel versions, and treating their inference nodes like museum exhibits.

- The VRAM Bottleneck: You will inevitably have a customer who wants to run a model that just barely fits into your VRAM. The moment they attempt a batch size increase, `OOM (Out of Memory)` errors will cascade through your system. You must implement aggressive request-queueing middleware. Do not trust the frameworks to handle pressure; build your own circuit breaker.
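As a rough illustration of the "build your own circuit breaker" advice, the sketch below combines a bounded in-flight counter with a breaker that opens after repeated OOM failures. The class name `AdmissionGate`, its parameters, and the default thresholds are all hypothetical; a real deployment would tune them against its own hardware and wire them into whatever serving framework it uses.

```python
import time


class Overloaded(Exception):
    """Raised when a request is shed instead of being sent to the GPU."""


class AdmissionGate:
    """Hypothetical admission control: bounded concurrency plus an OOM breaker."""

    def __init__(self, max_in_flight=8, failure_threshold=3,
                 cooldown_s=30.0, clock=time.monotonic):
        self.max_in_flight = max_in_flight          # assumed per-node request limit
        self.failure_threshold = failure_threshold  # consecutive OOMs before opening
        self.cooldown_s = cooldown_s                # how long the breaker stays open
        self.clock = clock                          # injectable for testing
        self.in_flight = 0
        self.failures = 0
        self.opened_at = None

    def acquire(self):
        """Call before forwarding a request to the inference backend."""
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                raise Overloaded("breaker open: backend recently ran out of memory")
            # Cooldown elapsed: close the breaker and let traffic probe again.
            self.opened_at = None
            self.failures = 0
        if self.in_flight >= self.max_in_flight:
            raise Overloaded("too many requests in flight")
        self.in_flight += 1

    def release(self, oom=False):
        """Call when a request finishes; pass oom=True if it failed with OOM."""
        self.in_flight -= 1
        if oom:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
        else:
            self.failures = 0
```

The gate rejects early rather than queueing indefinitely, so callers get a fast, explicit error they can retry with backoff instead of a cascade of OOM failures.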

Case Study: The "Mid-Sized Boutique" Failure

In late 2025, a startup attempted to monetize local clusters by offering "GPT-4 performance for 1/10th the cost." They failed within six months. Why? They treated local LLMs as a static service.

They didn't account for the "Drift in Model Performance." As they updated their base models to satisfy client requests for "smarter" output, they inadvertently changed the output distribution of the fine-tuned adapters built on top of them. Their clients' downstream RAG pipelines broke because the output format shifted by a few newline characters or JSON schema variances.

The Lesson: Monetization is not about the model; it's about the Contractual Consistency of the API response. If you change the model version, you must provide a "versioned endpoint" that stays immutable for at least six months. Clients will pay to keep a "dumber, consistent model" over a "smarter, breaking model."
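One way to picture the "versioned endpoint" rule is a small registry that refuses to overwrite or retire a published version until its freeze window has passed. This is only a sketch: `EndpointRegistry`, `ModelRelease`, and the 183-day constant (an approximation of the six months mentioned above) are illustrative names and values, not part of any real serving stack.

```python
from dataclasses import dataclass
from datetime import date, timedelta

FREEZE_DAYS = 183  # assumption: roughly the six-month window from the text


@dataclass(frozen=True)
class ModelRelease:
    version: str      # endpoint version, e.g. "v1"
    checkpoint: str   # hypothetical identifier of the exact model build
    released: date


class EndpointRegistry:
    """Maps immutable endpoint versions to the exact checkpoint they serve."""

    def __init__(self):
        self._releases = {}

    def publish(self, release):
        # A published version is never silently re-pointed at a new model.
        if release.version in self._releases:
            raise ValueError(f"{release.version} is already published and immutable")
        self._releases[release.version] = release

    def resolve(self, version):
        return self._releases[version].checkpoint

    def retire(self, version, today):
        release = self._releases[version]
        if today < release.released + timedelta(days=FREEZE_DAYS):
            raise PermissionError(f"{version} is still inside its freeze window")
        del self._releases[version]
```

A "smarter" model then ships as a new version next to the old one, and clients migrate on their own schedule.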

The "Workaround" Culture: How Customers Actually Use Local LLMs

In real-world enterprise environments, nobody is just calling an API. Users are building chaotic RAG pipelines that ingest PDFs, messy SQL dumps, and Slack archives. Your infrastructure will be pounded by non-optimal queries.

- Caching is the Revenue Maximizer: Implement semantic caching. If a client asks a question about their company's "Holiday Policy" for the tenth time, don't re-run the inference. Serve it from the cache. This isn't just for speed; it's to keep your hardware cool and extend its life.

- Prompt-Injection Defense as a Service: This is a niche but profitable security service. Offer to wrap your client's prompts in a robust "Guardrail" layer. Use a secondary, tiny model (like a distilled Phi-3 or Qwen-1.5B) solely to classify the input for security before passing it to the heavy-duty model.
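The semantic caching idea from the first bullet can be sketched in a few lines. To stay self-contained, this example uses a toy bag-of-words vector in place of a real embedding model, so it only catches near-identical wording; the `SemanticCache` class, the `answer` helper, and the 0.8 threshold are assumptions for illustration, not a recommended configuration.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold=0.8):
        self.threshold = threshold  # assumed similarity cutoff
        self.entries = []           # list of (vector, cached answer)

    def get(self, prompt):
        vec = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(vec, e[0]), default=None)
        if best is not None and cosine(vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt, answer):
        self.entries.append((embed(prompt), answer))


def answer(prompt, cache, run_model):
    """Serve from the cache when a similar prompt was seen; otherwise run inference."""
    cached = cache.get(prompt)
    if cached is not None:
        return cached
    result = run_model(prompt)
    cache.put(prompt, result)
    return result
```

In a multi-tenant setup the cache would presumably be kept per client, so one customer's answers are never served to another.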

Counter-Criticism: Why "Local" Is Often a Liability

There is a loud contingent on platforms like Hacker News that argues "Local LLMs are a hobbyist's fantasy." They point to the "Maintenance Tax."

- The Argument: Maintaining a rack of GPUs is a CAPEX-heavy, talent-draining vortex. By the time you’ve optimized your kernel and latency, NVIDIA has released a new chip that makes your previous-gen hardware obsolete, forcing you to write off your investment.

- The Reality: They are right about the hardware churn, but they miss the "Data Gravity" point. Data is heavy. Moving massive, sensitive, proprietary datasets to a public cloud creates egress cost nightmares and security risks. You aren't selling the speed of the GPU; you are selling the proximity to the data.

The "Scaling" Wall: When Things Actually Break

Once you move from 1 node to 50 nodes, the management overhead explodes. You will encounter:

- Thermal Throttling at Scale: Your monitoring software will show 95% GPU utilization, but your actual throughput will be 60% of theoretical because the cards are lowering their clocks to stay within thermal limits.

- Network Partitioning: In a multi-node inference setup, the interconnect between nodes (InfiniBand vs. Ethernet) becomes the primary failure point. If you aren't using an RDMA-capable fabric, your distributed inference will crawl.

- The "Support Nightmare": Clients will complain that their local app is "slow." It won't be your API; it will be their terrible client-side code that makes 50 serial calls to your API instead of one batch request. You need to implement strict rate-limiting and quota-management features, or one bad developer will destroy your cluster's performance for every other customer.
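The rate-limiting requirement in the last bullet is commonly met with a token bucket per API key. The following is a minimal sketch under that assumption; `TokenBucket`, `PerClientLimiter`, and the rate and burst values are illustrative, and a production limiter would also need shared state across gateway processes.

```python
import time


class TokenBucket:
    """Classic token bucket: refills at a steady rate up to a burst capacity."""

    def __init__(self, rate_per_s, burst, clock=time.monotonic):
        self.rate = rate_per_s
        self.capacity = burst
        self.tokens = float(burst)
        self.clock = clock           # injectable for testing
        self.updated = clock()

    def allow(self, cost=1.0):
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the burst size.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class PerClientLimiter:
    """One bucket per API key, so a noisy client only exhausts its own quota."""

    def __init__(self, rate_per_s, burst, clock=time.monotonic):
        self._args = (rate_per_s, burst, clock)
        self._buckets = {}

    def allow(self, api_key):
        if api_key not in self._buckets:
            self._buckets[api_key] = TokenBucket(*self._args)
        return self._buckets[api_key].allow()
```

A client firing 50 serial calls burns through its own burst allowance and starts getting rejections, while other API keys are unaffected.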

The Human Element: Building Trust

If you want to survive the 2026 shakeout, you must build a reputation for "boring reliability." Every time an AI company "hallucinates" or their API goes down, it is an opportunity for you to position your private infrastructure as the adult in the room.

Write detailed post-mortems for your outages. Even if you don't have clients yet, publish them on your engineering blog. Show the "broken tape" holding your backend together. Transparency is the only currency left in an industry flooded with "Black Box" AI marketing.

How do I handle the hardware depreciation cycle in my pricing?

You shouldn't charge for the "GPU." You should charge for the "Token-Hour" or "Concurrent Request Slot." Calculate your total cost of ownership (TCO) over 24 months, including electricity and the inevitable hardware replacement, and divide that by your projected utilization. Add a 40% margin for "operational stress." If the math doesn't work, don't buy the hardware; lease it.
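The pricing rule above can be written out as arithmetic. This sketch reads "40% margin" as a markup on cost and uses 730 hours per month as an approximation; the function name, its parameters, and every figure in the example call are hypothetical placeholders, not market data.

```python
HOURS_PER_MONTH = 730  # approximation: 8,760 hours per year / 12


def slot_hour_price(hardware_cost, monthly_power_cost, monthly_other_cost,
                    slots, utilization, months=24, margin=0.40):
    """Price per concurrent-request-slot hour from TCO and projected utilization.

    hardware_cost should already include any replacement reserve.
    utilization is the fraction of slot-hours you expect to actually bill.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tco = hardware_cost + months * (monthly_power_cost + monthly_other_cost)
    billable_slot_hours = months * HOURS_PER_MONTH * slots * utilization
    cost_per_slot_hour = tco / billable_slot_hours
    return cost_per_slot_hour * (1 + margin)


# Hypothetical node: all inputs are made-up illustration values.
price = slot_hour_price(hardware_cost=48_000, monthly_power_cost=400,
                        monthly_other_cost=600, slots=4, utilization=0.5)
print(f"price per slot-hour: {price:.2f}")
```

Because utilization sits in the denominator, halving it doubles the price you need to charge, which is usually the number that decides between buying and leasing.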

Is it really possible to compete with OpenAI or Anthropic?

Not on raw performance per dollar. But you can compete on compliance, data privacy, and reliability. If a bank needs to summarize thousands of loan documents, it is unlikely to send them to a public API. It will pay you for a secure, local pipeline. That is your niche.

What is the biggest mistake newcomers make in this space?

Over-engineering the software and under-engineering the cooling and power delivery. Many beginners think they can run a cluster out of a back office. When the ambient temperature hits 30°C and the power spikes during a massive batch inference, the whole system will drop. Focus on the physical layer first.

Why does my local inference feel slower than the cloud, even with high-end hardware?

Latency is often about how you batch your requests. If you aren't using `vLLM` or `TGI` with continuous batching, you are wasting hardware cycles. Also, check your quantization. If you're running FP16 when your application only needs 4-bit or 8-bit, you're burning memory bandwidth for no perceptible gain in output quality.
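To make the quantization point concrete, here is a back-of-the-envelope sketch of how weight precision changes memory footprint, and with it a rough upper bound on single-stream decode speed. It assumes decoding is memory-bandwidth-bound and ignores the KV cache, activations, and quantization metadata; the 8-billion-parameter model and the 1,000 GB/s bandwidth figure are hypothetical.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate size of the model weights alone, in gigabytes (1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def max_decode_tokens_per_s(num_params, bits_per_weight, bandwidth_gb_s):
    """Rough ceiling for batch-size-1 decoding: every weight is read once per token."""
    return bandwidth_gb_s / weight_memory_gb(num_params, bits_per_weight)


PARAMS = 8e9          # hypothetical 8B-parameter model
BANDWIDTH = 1000.0    # hypothetical GPU memory bandwidth in GB/s

for bits in (16, 8, 4):
    size = weight_memory_gb(PARAMS, bits)
    ceiling = max_decode_tokens_per_s(PARAMS, bits, BANDWIDTH)
    print(f"{bits:>2}-bit: {size:5.1f} GB weights, about {ceiling:.0f} tokens/s ceiling")
```

Real throughput will land below these ceilings, but the ratio between precisions is the useful part: each halving of bits per weight roughly halves the bytes that must move per generated token.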

Should I offer "Fine-tuning" as a service?

Proceed with extreme caution. Fine-tuning is a black hole of client expectations. They will expect a fine-tuned model to magically understand their business domain better than it actually can. Sell the "Inference Hosting" first. Only offer fine-tuning if the client is willing to pay for a dedicated data scientist on your team to manage the training runs.